Wan 2.6, Seedance & Midjourney Hacks

Hello Friend,

Welcome back to the Generative Path, your weekly infusion of AI-powered creativity curated by ZenRobot to keep you ahead of the curve with the latest tools, trends, and techniques.

Just announced: registration is open for March’s Online Masterclass - Gen AI for Content Creation. If you’re ready to create smarter, faster, and more strategically using AI, this Masterclass will give you practical frameworks and hands-on guidance you can apply right away. Seats are available now. There will be no Masterclass in April, so this is your opportunity to get ahead before the next break in sessions. Secure your spot and join us in March. Want the full rundown? Get the info pack here.

This week, we’re looking at how generative video is maturing. Wan 2.6 leads the way, offering open-source cinematic control with stronger motion consistency and scene stability, making it a serious tool for story-driven work. We’ve also got a quick Midjourney workflow upgrade that saves time when managing large batches, a spotlight on Jyo John Mulloor’s playful AI worlds, and a fresh pulse of energy with Gramatik Radio. Plus, a look at Seedance 2.0 and another round of AI vs. Reality to keep your instincts sharp. Let’s get into it.

Feature of the Week: Wan 2.6

Wan 2.6 is the latest update in open-source video generation, and it is quickly becoming a serious option for creators who want cinematic control without a closed ecosystem. Built for text-to-video, image-to-video, and video extension workflows, Wan 2.6 focuses on motion consistency, scene coherence, and realistic camera behavior.

What makes Wan 2.6 stand out is how well it handles movement over time. Characters stay on-model, environments remain stable, and camera motion feels intentional rather than chaotic. This makes it especially useful for story-driven content such as short films, concept trailers, previs, and mood explorations, where continuity matters.

For filmmakers, designers, and experimental studios, Wan 2.6 is a signal of where generative video is heading: less novelty, more craft; fewer one-off clips, more usable scenes. It is not about replacing production, but about accelerating ideation and giving creators a new way to think in motion.

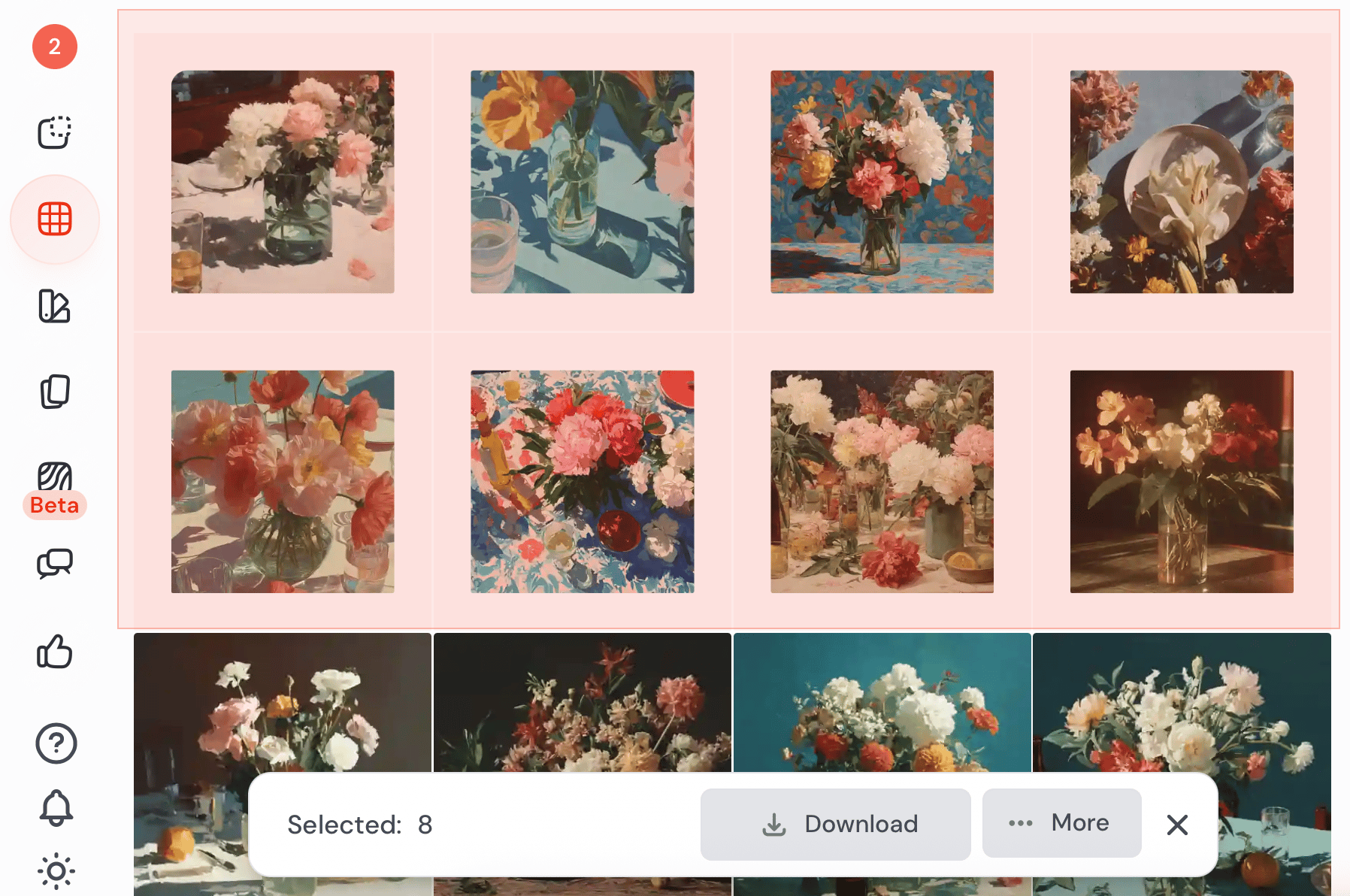

Creative Hack: Midjourney Multi-Image Download

Midjourney’s Organise View now makes downloading images much faster. Instead of opening each image individually, switch to Organise to see all your generations in a clean grid. From there, select any set of images and save them directly to your machine.

This is perfect when you are working through large batches of generations and need to collect selects quickly. No extra clicks, no opening image pages, just scan, choose, and save.

Community Spotlight: Jyo John Mulloor

Jyo is an AI Creative Director and digital artist who uses generative tools to build playful, futuristic worlds. His work blends animals, surreal role reversals, and meme-driven humor, often flipping the familiar to spark curiosity. Backed by years of commercial experience, he uses AI to experiment freely, create striking visuals, and rethink how stories and ideas can be seen today.

This Week’s Flow State: Gramatik Radio

Turn up the groove with Gramatik Radio on Spotify. Blending funk-driven basslines, hip-hop rhythms, and smooth electronic production, this playlist brings a confident, upbeat energy that’s perfect for creative momentum. It’s lively without being overwhelming, keeping your focus sharp while your head nods along. Press play and let the groove move your workflow forward.

AI Trend: Seedance 2.0

Seedance 2.0 is quickly becoming one of the most talked-about video models online. Creators are sharing mind-bending generations across X and LinkedIn, from hyper-cinematic action shots to stylized character moments that feel pulled straight from a film set.

What stands out is the consistency. Motion feels intentional. Lighting holds. Characters maintain identity across shots. It is not just eye candy. It signals how fast AI video is closing the gap between concept and production.

If you have been watching from the sidelines, now is the time to experiment. The internet is already showing what is possible.

AI vs. Reality: Can You Tell the Difference?

This week, we’ve got a brand-new pair of images to test your perception again. Think you can tell which is AI-made and which is the real deal? Take your guess and we’ll reveal the answer in the next issue!

Last week, we kicked off our AI vs. Reality face-off with raging fires. Now for the moment of truth: if you guessed that the image on the right was the real photo, you got it right. The left image was created with Midjourney, demonstrating how convincingly it can replicate real-world lighting, texture, and depth.

Prompt: Burning building at night

Join the Conversation! AI is constantly evolving, unlocking new creative possibilities every day. What caught your attention this week? Hit reply and let us know. We’d love to hear from you.

Follow ZenRobot for more AI insights, and if you found this interesting, share it with someone who would too.

What did you think of today’s email? 🤖 ZenRobot wants to learn from you!