ElevenLabs Nodes, Realism Workflows & Clay AI Films

Hello Friend,

Welcome back to The Generative Path, your weekly infusion of AI-powered creativity, curated by ZenRobot to keep you ahead of the curve with the latest tools, trends, and techniques.

Huge Giveaway Alert! We’re officially one year into The Generative Path! To celebrate, we’re giving away lifetime access to three new members who join our Gen AI Academy on Skool in the next 7 days. If you’ve been watching from the sidelines, now’s the time to jump in for the learning experience of a lifetime. Inside the Academy, we focus on practical tools, creative workflows, and learning by doing, not just watching. Check it out!

This week is all about control in the creative process. ElevenLabs’ new node-based workflow turns audio generation into something you can build, tweak, and scale with precision. We’re also sharing a simple workflow to push Midjourney images into photorealism, spotlighting Jonathan Tait’s cinematic AI work, and looking at how structured pipelines are powering a new wave of AI stop-motion content. Add Sola Rosa Radio and a fresh AI vs. Reality challenge, and you’re ready to create with more intention.

Feature of the Week: ElevenLabs Node-Based Workflows

ElevenLabs is expanding beyond simple text-to-speech with a node-based workflow that gives creators more control over how audio is generated and shaped. Instead of relying on a single prompt, you can build structured pipelines where each step handles a specific task, like generating a voice, adjusting tone, or refining the output.

Because each step is its own node, you can tweak one part of the chain without redoing everything, and reuse proven workflows across projects. For creators working on narration, character voices, or audio content at scale, this means faster iteration and more consistent results. It shifts ElevenLabs from a one-off generation tool into a system you can design around, a practical step toward production-ready AI audio where control matters as much as output.

Creative Hack: Realism Workflow

Want to boost the realism of your Midjourney creations? Start by generating your base image in Midjourney. Focus on getting the composition, characters, and overall style exactly where you want them. This image becomes your visual blueprint, with layout, identity, and storytelling already locked in.

Next, bring that image into Nano Banana and use a prompt like: “keep the style, characters, and composition of the image, but make everything look more realistic.” The goal here is not to redesign, but to refine. You are guiding the model to enhance realism while preserving the original structure and intent.

This approach works because modern image models respond strongly to structure and constraints. By clearly separating creation (Midjourney) from refinement (Nano Banana), you gain both creative control and visual fidelity. The result is a polished, photoreal version of your original concept, without losing the core idea that made it work in the first place.

Community Spotlight: Jonathan Tait

Jonathan Tait is a multidisciplinary artist exploring the intersection of AI, narrative, and visual texture across both digital and physical mediums. Working with tools like Midjourney, ComfyUI, Blender, and Unreal Engine, he creates cinematic visuals that feel emotionally grounded, even when they lean into the surreal. Drawing on a background in filmmaking and post-production, he makes work that blurs categories, moving between fashion, sculpture, and generative storytelling, and shows what can happen when you invite the machine into the creative process.

This Week’s Flow State: Sola Rosa Radio

Ease into the groove of Sola Rosa Radio on Spotify. Blending soulful rhythms, funk-infused beats, and smooth electronic textures, this playlist brings a warm, rhythmic energy that feels both relaxed and alive. It’s a steady companion for creative work, light focus, or simply lifting the mood without distraction. Press play and let the groove carry you through.

AI Trend: Stop Motion Style with AI

Zain Ul Abedien highlights just how far AI workflows have come. What began as quirky visuals in 2022 has evolved into full clay-style stop-motion ad creatives generated in under 15 minutes, using structured pipelines and node-based systems.

What stands out is the consistency. By building repeatable workflows, creators can maintain character design, lighting, and motion across sequences, producing stop-motion style films that feel cohesive and surprisingly high quality. This is no longer just fast experimentation; it’s controlled, production-ready output. As these “imagine-to-ad” pipelines mature, they’re opening the door for brands to produce cinematic, stylized campaigns in a fraction of the time and at a fraction of the cost.

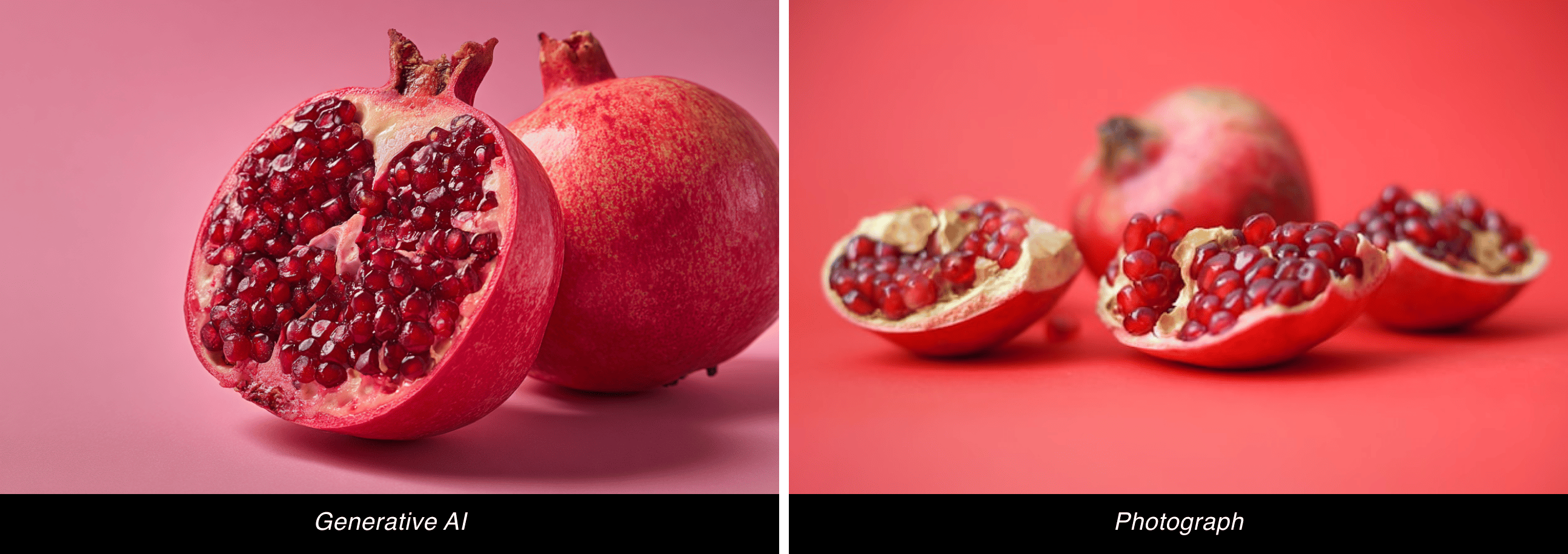

AI vs. Reality: Can You Tell the Difference?

This week, we’ve got a brand-new pair of images to test your perception again. Think you can tell which is AI-made and which is the real deal? Take your guess and we’ll reveal the answer in the next issue!

Last week, we kicked off our AI vs. Reality face-off with pomegranates. Now for the moment of truth: if you guessed that the image on the right was the real photo, you got it right. The left image was created with Midjourney, showing off the program’s remarkable ability to generate realistic-looking images.

Join the Conversation! AI is constantly evolving, unlocking new creative possibilities every day. What caught your attention this week? Hit reply and let us know. We’d love to hear from you.

Follow ZenRobot for more AI insights, and if you found this interesting, share it with someone who would too.

What did you think of today’s email? 🤖 ZenRobot wants to learn from you!